4. A visual proof that neural nets can compute any function

Michael Nielsen, Jan 2016

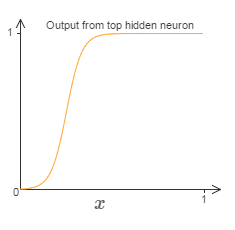

Neural networks can compute any (continuous) function, however complicated. Strictly speaking, a network does not compute the function exactly. It computes an approximation, and increasing the number of hidden neurons improves that approximation.
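One way to make "approximation" precise, sketched here for a single input $x$ on the interval $[0, 1]$ and assuming (as a simplification) a linear output neuron that just sums the weighted outputs of $N$ sigmoid hidden neurons with weights $w_j$, biases $b_j$ and output weights $v_j$:

$$
g(x) = \sum_{j=1}^{N} v_j\,\sigma(w_j x + b_j), \qquad \sigma(z) = \frac{1}{1 + e^{-z}},
$$

$$
|g(x) - f(x)| < \varepsilon \quad \text{for all } x \in [0, 1].
$$

For a given target $f$ and tolerance $\varepsilon > 0$, the claim is that suitable weights and biases exist; asking for a smaller $\varepsilon$ generally requires a larger $N$.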

To see why this works, it is necessary to understand how the weight and bias chosen for a hidden neuron affect its output. Changing the bias shifts the graph of the neuron's output left or right (increasing the bias moves it to the left), but the shape of the graph stays the same.
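The bias effect can be checked numerically. This minimal sketch assumes a single sigmoid neuron $\sigma(wx + b)$ with one input; the helper names `neuron_output` and `shifted_copy` are hypothetical. It compares the neuron's curve for several biases against the bias-0 curve moved left by $b/w$: if the bias only translates the graph, the gap is zero up to rounding.

```python
import math

def sigmoid(z):
    """Logistic function sigma(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(x, w, b):
    """Output of one sigmoid neuron with a single input x, weight w and bias b."""
    return sigmoid(w * x + b)

def shifted_copy(x, w, b):
    """The bias-0 curve evaluated at x + b/w, i.e. that curve moved left by b/w."""
    return neuron_output(x + b / w, w, 0.0)

w = 8.0
xs = [i / 10 for i in range(-10, 11)]
for b in (-4.0, 4.0, 8.0):
    # If changing b only translates the curve, the gap is zero up to rounding.
    gap = max(abs(neuron_output(x, w, b) - shifted_copy(x, w, b)) for x in xs)
    print(f"b={b:+.0f}: output is 0.5 at x={-b / w:+.2f}, "
          f"max gap to shifted copy {gap:.1e}")
```

As $b$ grows from $-4$ to $8$, the point where the output crosses $0.5$ moves from $x = 0.5$ to $x = -1$, i.e. to the left.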


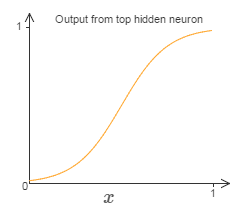

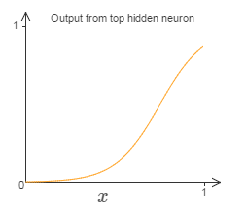

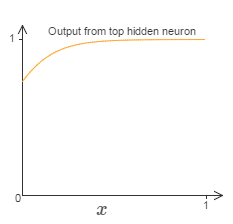

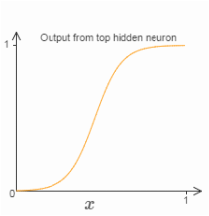

Decreasing the weight broadens the curve, while increasing the weight makes it steeper.
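The weight effect can be quantified with two small calculations. Since $\sigma'(0) = 1/4$, the slope of $\sigma(wx + b)$ at its midpoint $x = -b/w$ is $w/4$; and because $\sigma(z) = 0.9$ at $z = \ln 9$ and $0.1$ at $z = -\ln 9$, the output rises from 0.1 to 0.9 over an interval of width $2\ln 9 / |w|$. The sketch below (helper names are hypothetical) checks the slope with a central difference.

```python
import math

def sigmoid(z):
    """Logistic function sigma(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))

def slope_at_midpoint(w, b, h=1e-6):
    """Central-difference slope of sigmoid(w*x + b) at its midpoint x = -b/w."""
    x0 = -b / w
    return (sigmoid(w * (x0 + h) + b) - sigmoid(w * (x0 - h) + b)) / (2 * h)

def transition_width(w):
    """Width of the x-interval where the output rises from 0.1 to 0.9: 2*ln(9)/|w|."""
    return 2 * math.log(9) / abs(w)

b = 0.0
for w in (1.0, 10.0, 100.0):
    print(f"w={w:>5}: slope at midpoint {slope_at_midpoint(w, b):.3f} "
          f"(w/4 = {w / 4:.3f}), 0.1 to 0.9 width {transition_width(w):.4f}")
```

A larger weight gives a proportionally larger slope and a proportionally narrower transition, which is the "steeper curve" described above.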

The goal is for each hidden neuron to output (approximately) a step function. Choosing a very large weight makes the sigmoid curve so steep that it is practically a step, and the bias then sets where the step occurs. The step sits at $s = -b/w$, so its position is proportional to the bias $b$ (with the opposite sign) and inversely proportional to the weight $w$.
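The relation $s = -b/w$ can be used in reverse: to place a step at a chosen $s$, pick a large $w$ and set $b = -ws$. This minimal sketch (the step position $s = 0.4$, the weights tried and the helper names are illustrative assumptions) measures how far the neuron is from an ideal unit step, ignoring a small window around $s$ where any finite weight still gives an intermediate value.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function; math.exp never overflows for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def bias_for_step(s, w):
    """Bias that puts the step of sigmoid(w*x + b) at x = s, using s = -b/w."""
    return -w * s

def ideal_step(x, s):
    """Unit step: 0 to the left of s, 1 from s onwards."""
    return 1.0 if x >= s else 0.0

s = 0.4
xs = [i / 100 for i in range(101)]
for w in (10.0, 100.0, 1000.0):
    b = bias_for_step(s, w)
    # Compare with the ideal step, skipping a small window around x = s.
    worst = max(abs(sigmoid(w * x + b) - ideal_step(x, s))
                for x in xs if abs(x - s) >= 0.05)
    print(f"w={w:>6}: b={b:>7}, step at -b/w={-b / w:.2f}, "
          f"worst error outside the window {worst:.2e}")
```

The step stays at $x = 0.4$ for every weight, while the error outside the window shrinks rapidly as $w$ grows.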

Once we know how these values affect the network's output, we can choose them to approximate any function. This is what makes neural networks so well suited to learning the functions that arise in many real-world problems.
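One way to turn step neurons into an approximation of an arbitrary function is to pair them: a neuron that steps up at $s_1$ with output weight $+h$ and one that steps down at $s_2$ with output weight $-h$ together produce a "bump" of height $h$ on $[s_1, s_2]$. Summing many narrow bumps gives a staircase that follows the target. The sketch below assumes inputs in $[0, 1]$, a linear output neuron (a plain weighted sum of the hidden outputs), a fixed large weight $w = 10{,}000$ and an arbitrary illustrative target function; `build_network`, `network_output` and `max_error` are hypothetical helpers.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function (no overflow in math.exp)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def target(x):
    """Arbitrary wiggly function on [0, 1], chosen only for this illustration."""
    return 0.2 + 0.4 * x**2 + 0.3 * x * math.sin(15 * x)

def build_network(f, n_bumps, w=10_000.0):
    """Hand-set hidden layer of 2 * n_bumps step-like sigmoid neurons on [0, 1].

    Bump k covers [k/n, (k+1)/n]: one neuron steps up at the left edge with
    output weight +h, another steps down at the right edge with output weight -h,
    where h is f evaluated at the middle of the interval.
    Returns (weight, bias, output_weight) triples; bias = -w * s puts the step at s.
    """
    neurons = []
    for k in range(n_bumps):
        s1, s2 = k / n_bumps, (k + 1) / n_bumps
        h = f((s1 + s2) / 2)
        neurons.append((w, -w * s1, h))
        neurons.append((w, -w * s2, -h))
    return neurons

def network_output(neurons, x):
    """Linear output neuron: weighted sum of the hidden activations."""
    return sum(v * sigmoid(w * x + b) for w, b, v in neurons)

def max_error(f, neurons, n_points=1000):
    """Largest |g(x) - f(x)| over a grid of points strictly inside [0, 1]."""
    xs = [(i + 0.5) / n_points for i in range(n_points)]
    return max(abs(network_output(neurons, x) - f(x)) for x in xs)

for n in (10, 40, 160):
    net = build_network(target, n)
    print(f"{2 * n:>4} hidden neurons: max error {max_error(target, net):.4f}")
```

The weights here are set by hand to mirror the constructive argument rather than learned by training. Using more bumps makes each stair narrower, so the printed error should shrink as the hidden layer grows, in line with the claim that more hidden neurons give a better approximation.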

